Beyond the Black Box

Dr. Madhav Hegde, MD

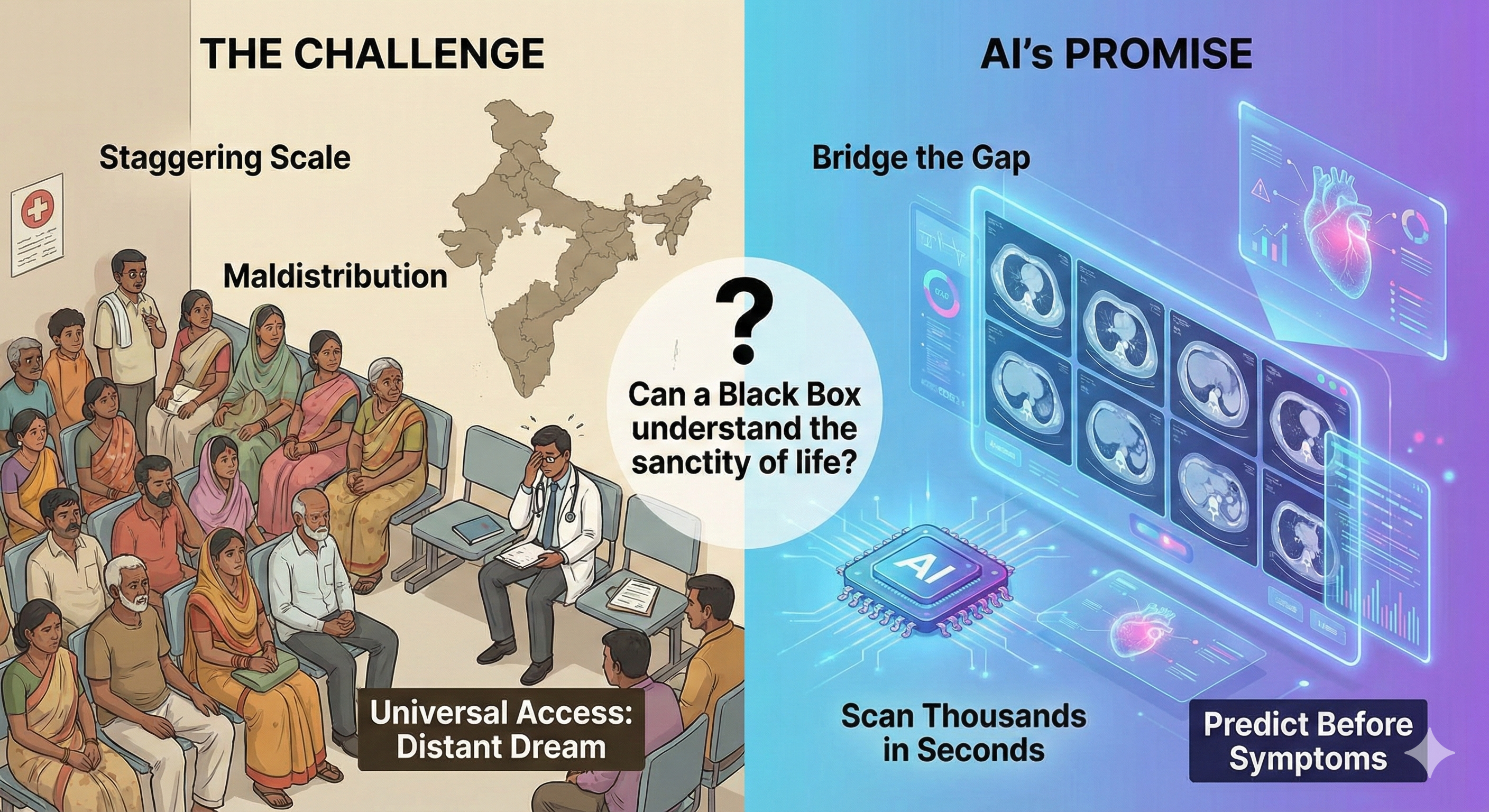

In India, the healthcare challenge is a matter of staggering scale. We face a maldistribution of medical professionals and infrastructure that makes universal access feel like a distant dream. Artificial Intelligence (AI) is a tool powerful enough to bridge that gap, capable of scanning thousands of CT scans in seconds or predicting heart attacks before a patient feels a flutter. But as we hand over the keys of diagnosis to algorithms, a chilling question remains: Can a black box ever truly understand the sanctity of a human life?

What do we need: Total automation or assisted intelligence?

How does AI remain deeply, stubbornly human?

1. The Human-in-the-Loop Strategic Pivot

In many tech sectors, the goal of AI is to remove the "slow" human from the equation. In healthcare, however, a Human-in-the-Loop (HITL) model seems the better strategic choice: assisted intelligence rather than autonomous intelligence. Technology (AI) is a junior partner, never the lead. This is vital for maintaining the trust of the patient-doctor duo, a relationship that is the bedrock of healing. Patients must have complete autonomy to choose or reject AI technologies, and should be provided with both options: the AI recommendation and the clinician's opinion.

2. AI Can See the Invisible, But It Needs a Local Reality Check

AI can now analyze mammography scans to predict the onset of breast cancer before any visual signs even appear to a human radiologist. It is a literal life-saver, but this superpower without scientific rigor is a liability. A futurist knows that an AI trained in a London lab might have a blind spot for a patient in rural Karnataka. This means an AI cannot be assumed to work in Bengaluru just because it worked in Dublin; it must prove itself to the local population before a single patient is treated.

3. Why Your Best Mental Health Advocate Might Be a Chatbot

For millions of Indians, the social stigma surrounding mental health is a barrier higher than any mountain. An unexpected ally has emerged: the non-judgmental chatbot. Because machines don't gossip or judge, they act as a vital bridge for those too inhibited to seek professional help. These aren't just simple scripts. An AI tool that analyzes subtle cues (tone of voice, facial expressions, and body language) can serve as a valuable first step toward self-care. Crucially, for the futurist, these tools aren't just reactive; they have the potential to predict and prevent probable flare-ups of psychiatric conditions, turning mental healthcare from a crisis-response system into a proactive shield.

4. Solving the Black Box with Scientific Plausibility

The biggest hurdle to trust is the Black Box: the phenomenon where an algorithm reaches a correct diagnosis, but no one knows how. Overcoming it requires Scientific Plausibility. An AI's decision-making must align with established medical science, not just mysterious correlations in the data. This requirement forces a new level of interdisciplinary collaboration, bringing coders and clinicians into the same room to ensure that the machine's logic makes sense to the doctor who ultimately holds the patient's hand.

5. Fighting Data Surplus and the Right to be Forgotten

Patients in India now have the right to be forgotten. This means you can demand your biological data be modified or removed from a database even after giving initial consent. It prevents your most sensitive health secrets from being repurposed for unauthorized research years down the line, ensuring that your digital twin doesn't outlive your privacy.

6. The Accountability Map

When a human doctor errs, the path to justice is well-worn. But who blames the bot?

• Malfunction (Designer/Developer): If the flaw is in the code or the original learning, the manufacturer is liable.

• Defective Implementation (Hospital/Clinician): If the tool was used incorrectly or without proper oversight, the accountability lies with the healthcare provider.

If an AI causes injury, the responsibility to pay lies with all stakeholders in the chain. By making safety a shared financial and legal burden, the bot is never left to take the blame alone.

--------------------------------------------------------------------------------

The Clinic of 2030

Imagine an Indian clinic in 2030. The infrastructure gap is closing, not because we have ten times the doctors, but because every doctor is AI-augmented. In this future, AI handles the data-heavy lifting, but the human clinician remains the moral compass. The "Human-in-the-Loop" isn't a limitation of our technology; it is the ultimate feature of our healthcare system. As machines become more human in their ability to diagnose, we need to ensure our healthcare remains humane in its treatment of people.

As we step into this high-tech frontier, we must ask: In our race to make machines smarter, are we doing enough to make the systems wiser?